Specifically, the following three experiments are conducted. In addition, the model with the arbitrary number of interpolations like SuperSLoMo is also tested with one interpolation in order to put it into the framework. For the evaluation, the test-split of VimeoSeptuplet and Middlebury-others, HD are used. As the training data for meta-learning, a train-split of VimeoSeptuplet is used. In this paper, the experiments of meta-learning are performed on four models, DVF, SuperSloMo, SepConv, and DAIN, as baselines. You can see that the above losses are applied to the MAML framework, and the parameters are updated. The following figure shows the pseudo-code written based on the above two internal and external losses. Suppose a model is $f_\theta$ ($\theta$ is a learning parameter), and $T_I$ with close frame intervals. After that, the actual parameter update is performed by the Outer loop. Specifically, MAML is a parameter update technique consisting of an inner loop and an outer loop to make the model adapt to new tasks. MAML (Model-Agnostic Meta-Learning ) is a model-independent meta-learning technique. All other images are taken from the SAVFI paper) About MAML

(Note that the images in this article are taken from the MAML paper for MAML. In this article, we will discuss this paper in detail. In this paper, the authors propose a method for meta-learning of frame interpolation models by applying the MAML framework, which has recently attracted much attention in the meta-learning community, to frame interpolation. In "Scene-Adaptive Video Frame Interpolation via Meta-Learning", a method for meta-learning of frame interpolation models is proposed. In addition, fine-tuning and other methods to solve this problem have not been studied much. However, existing highly accurate frame interpolation models are very large and require additional computation time when training new task data. Since the advent of SuperSloMo, however, models that output more than one arbitrary intermediate image have been developed. Until the advent of SuperSloMo, published by NVIDIA researchers, it was common to input two images to the model and output one intermediate image.

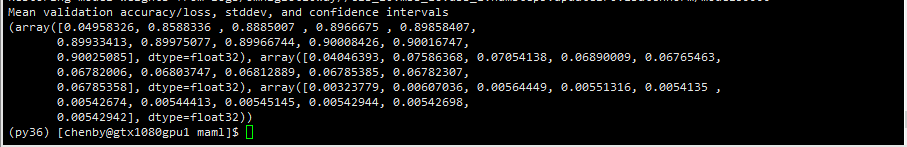

Such frame interpolation is one of the CV fields with a long history, but nowadays it is common to compute intermediate images by CNN. When converting low-fps video to high-fps video, frame interpolation is basically the core. In practice, a computer creates a continuous intermediate image from the previous and next images softly. By interpolating images between frames, a choppy image can be made smooth. Subjects: Computer Vision and Pattern Recognition (cs.CV)įrame interpolation is a technique to make images look smooth. Written by Myungsub Choi, Janghoon Choi, Sungyong Baik, Tae Hyun Kim, Kyoung Mu Lee ✔️ Improved PSNR values over baseline in various frame interpolation models with light computational complexity Scene-Adaptive Video Frame Interpolation via Meta-Learning ✔️ Suggest new MAML findings to change data distribution in Innerloop and Outerloop Meta-training a single vector to initialize all the $N$ weight vectors in theĬlassification head performs the best.✔️ Apply MAML framework to frame interpolation to respond quickly to new scenes Investigate several approaches to make MAML permutation-invariant, among which We find that these permutations lead to a huge variance ofĪccuracy, making MAML unstable in few-shot classification.

Then have "$N!$" different permutations to be paired with a few-shot task of Second, we find that MAML is sensitive to the class Gradient steps in its inner loop update, which contradicts its common usage inįew-shot classification. First, we find that MAML needs a large number of In this paper, we point out several key facets of how to train MAML to excel inįew-shot classification. Nevertheless, its performance on few-shotĬlassification is far behind many recent algorithms dedicated to the problem. Download a PDF of the paper titled How to Train Your MAML to Excel in Few-Shot Classification, by Han-Jia Ye and 1 other authors Download PDF Abstract: Model-agnostic meta-learning (MAML) is arguably one of the most popular
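To make MAML's inner-loop and outer-loop updates more concrete, here is a minimal sketch in plain Python. It is not the SAVFI implementation: as an assumption for illustration it uses a scalar toy model $f_\theta(x) = \theta x$ with a mean-squared-error loss in place of a frame interpolation network, and the names `inner_update`, `maml_step`, `sample_task`, and `meta_train`, as well as the step sizes `alpha` and `beta`, are hypothetical. Each task supplies one data set for the inner loop and a separate one for the outer loop.

```python
import random


def mse_grad(theta, data):
    """Gradient w.r.t. theta of the mean squared error of f_theta(x) = theta * x."""
    return sum(2.0 * x * (theta * x - y) for x, y in data) / len(data)


def mse_hess(data):
    """Second derivative of the same loss (does not depend on theta for this toy model)."""
    return sum(2.0 * x * x for x, _ in data) / len(data)


def inner_update(theta, inner_data, alpha):
    """Inner loop: one gradient step that adapts theta to a single task."""
    return theta - alpha * mse_grad(theta, inner_data)


def maml_step(theta, tasks, alpha, beta):
    """Outer loop: update the shared theta using the loss of the adapted parameters."""
    meta_grad = 0.0
    for inner_data, outer_data in tasks:
        adapted = inner_update(theta, inner_data, alpha)
        # Chain rule through the inner step: d(adapted)/d(theta) = 1 - alpha * hessian.
        meta_grad += mse_grad(adapted, outer_data) * (1.0 - alpha * mse_hess(inner_data))
    return theta - beta * meta_grad / len(tasks)


def sample_task(rng, n=5):
    """Toy task (assumption): regress y = slope * x for a randomly drawn slope."""
    slope = rng.uniform(1.0, 3.0)

    def draw():
        xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        return [(x, slope * x) for x in xs]

    return draw(), draw()  # (inner-loop data, outer-loop data)


def meta_train(steps=200, alpha=0.1, beta=0.05, seed=0):
    """Run the outer loop for a fixed number of meta-updates, starting from theta = 0."""
    rng = random.Random(seed)
    theta = 0.0
    for _ in range(steps):
        tasks = [sample_task(rng) for _ in range(4)]
        theta = maml_step(theta, tasks, alpha, beta)
    return theta


if __name__ == "__main__":
    print("meta-learned initial theta:", meta_train())
```

Because the toy loss is quadratic, the second-order term of the MAML gradient can be written by hand. With a real network this term would normally come from an automatic differentiation library instead.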
